The SAS SBC-5 Standard: What It Actually Means for People Running Storage at Scale

If you manage storage in a modern data center, you don’t experience standards as documents—you experience them as uptime, performance, and fewer late-night emergencies.

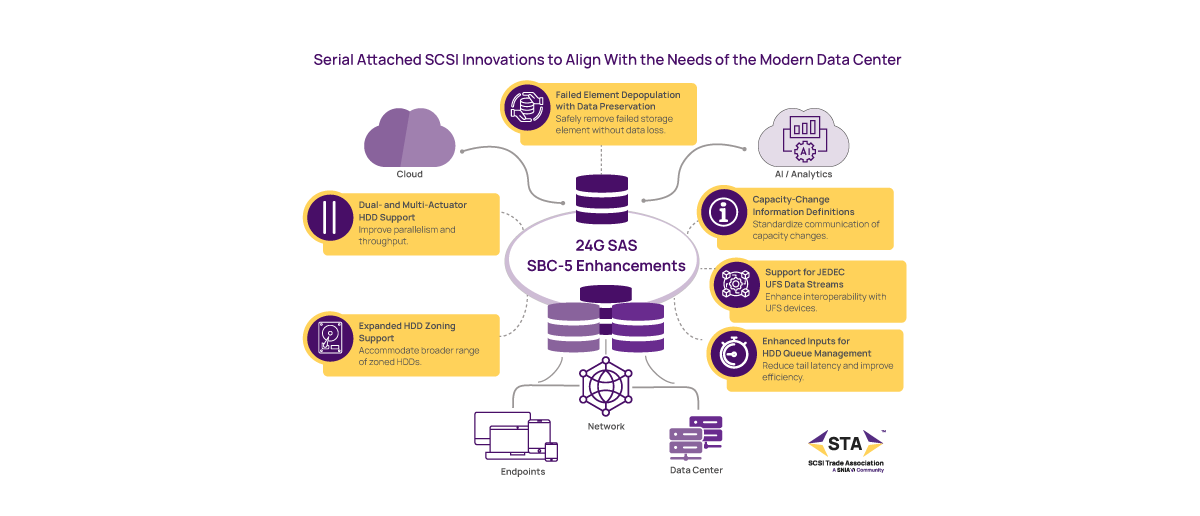

That’s what makes the release of SCSI Block Commands – 5 (SBC-5) interesting. On paper, it’s an incremental update to a mature and proven standard. In practice, it addresses some very real operational pain points in large-scale environments.

Instead of walking through features one by one, let’s look at what SBC-5 changes from a user’s point of view.

When a Drive Fails, Everything Doesn’t Have to Stop

One of the most practical improvements in SBC-5 is failed element depopulation with data preservation.

In plain terms: when part of a drive fails, you no longer have to take the entire device offline, reformat it, or risk losing data. The system can remove the failed portion and keep running—just with slightly reduced capacity.

Why this matters:

- Less disruption during failures

- Faster recovery times

- Fewer full rebuild events

For operators, this could be the difference between a contained issue and a cascading incident.
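To make the capacity trade-off concrete, here is a toy Python model of depopulation. It is a sketch under stated assumptions, not how SBC-5 or any drive firmware implements the feature: the `Drive` class, its per-element capacities, and the `depopulate` method are hypothetical names invented for illustration, and the model covers only the capacity accounting, not how data is preserved.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Drive:
    """Toy model of a drive built from independent storage elements (hypothetical)."""
    # Capacity in TB contributed by each element, keyed by element id.
    elements: Dict[int, float] = field(default_factory=dict)
    online: bool = True

    @property
    def capacity_tb(self) -> float:
        # Usable capacity is the sum of the elements still in service.
        return sum(self.elements.values())

    def depopulate(self, element_id: int) -> float:
        """Remove one failed element and return the capacity it took with it.

        The drive stays online; only the usable capacity shrinks.
        """
        return self.elements.pop(element_id)


# Made-up example: four elements of 2 TB each, element 2 fails.
drive = Drive(elements={0: 2.0, 1: 2.0, 2: 2.0, 3: 2.0})
lost = drive.depopulate(2)
print(f"lost {lost} TB, {drive.capacity_tb} TB still usable, online={drive.online}")
```

The point of the sketch is the contrast with the older model, where the same failure would flip `online` to false for the whole device.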

More Performance Without More Drives

SBC-5 introduces better support for dual- and multi-actuator HDDs, which are designed to increase parallelism inside a single drive.

What you experience:

- Higher IOPS per terabyte

- Better throughput from existing infrastructure

- Improved efficiency at scale

Instead of scaling performance by adding more devices, you get more out of each one.

More Control Over “Noisy” Workloads

Storage teams know the pain of unpredictable latency, especially in mixed or hyperscale workloads. SBC-5 enhances Command Duration Limits (CDLs) for HDDs, giving hosts more control over how long their operations are allowed to take.

What that translates to:

- Better control over tail latency

- More predictable performance under load

- Improved workload balancing

This is particularly valuable in environments where consistency matters more than peak speed.
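The effect of a duration limit on tail latency can be illustrated with a small Python simulation. This is a sketch, not the CDL mechanism itself: the latency samples are made up, the helper names `percentile` and `apply_duration_limit` are hypothetical, and it assumes the simplest case, where a command that exceeds its limit is handed back to the host at the limit so the host can retry elsewhere.

```python
def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list (pct is an integer 1..100)."""
    ordered = sorted(samples)
    # Integer ceiling of pct/100 * n, avoiding floating-point rounding.
    rank = (pct * len(ordered) + 99) // 100
    return ordered[rank - 1]


def apply_duration_limit(latencies_ms, limit_ms):
    """Cap each command at limit_ms.

    Returns the latencies the host observes and how many commands hit the limit.
    """
    capped = [min(lat, limit_ms) for lat in latencies_ms]
    expired = sum(1 for lat in latencies_ms if lat > limit_ms)
    return capped, expired


# Made-up sample: 97 fast commands and 3 stragglers.
latencies_ms = [5.0] * 97 + [400.0, 650.0, 900.0]

capped, expired = apply_duration_limit(latencies_ms, limit_ms=50.0)
print("p99 without limit:", percentile(latencies_ms, 99))
print("p99 with limit:   ", percentile(capped, 99))
print("commands that hit the limit:", expired)
```

In this toy data the median is unchanged; only the worst few commands are affected, which is the trade the host is choosing to make.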

Better Fit for Modern, Sequential Workloads

With expanded zoning support, SBC-5 improves how systems work with zoned storage, especially for SMR-based HDDs.

Why users care:

- More flexibility in deploying high-capacity drives

- Better alignment with large, sequential workloads (think AI datasets, archives, logs)

- Fewer workarounds at the application layer

This is about making high-density storage easier to use.
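For readers new to zoned storage, the core constraint is that writes inside a zone must be sequential, tracked by a per-zone write pointer. The Python sketch below models that rule only. The `Zone` class and its methods are hypothetical names for illustration and do not correspond to any SBC-5 command or real library API.

```python
class Zone:
    """Toy sequential-write zone: writes must land exactly at the write pointer."""

    def __init__(self, start_lba: int, size_blocks: int):
        self.start_lba = start_lba
        self.size_blocks = size_blocks
        self.write_pointer = start_lba  # next LBA that may be written

    def write(self, lba: int, num_blocks: int) -> None:
        # Reject anything that is not a strict append at the write pointer.
        if lba != self.write_pointer:
            raise ValueError(
                f"non-sequential write: expected LBA {self.write_pointer}, got {lba}"
            )
        if lba + num_blocks > self.start_lba + self.size_blocks:
            raise ValueError("write crosses the end of the zone")
        self.write_pointer += num_blocks

    def reset(self) -> None:
        # Rewind the write pointer so the whole zone can be rewritten.
        self.write_pointer = self.start_lba


zone = Zone(start_lba=0, size_blocks=1024)
zone.write(0, 256)      # accepted: starts at the write pointer
zone.write(256, 256)    # accepted: continues sequentially

try:
    zone.write(100, 8)  # rejected: lands behind the write pointer
    rejected = False
except ValueError:
    rejected = True

print("write pointer:", zone.write_pointer, "random write rejected:", rejected)
```

Append-heavy workloads such as logs and archives already write this way, which is why they map onto zoned drives with few application changes.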

Fewer Surprises When Capacity Changes

Capacity changes—whether due to failures, maintenance, or reconfiguration—can be messy and unpredictable.

SBC-5 standardizes how devices report those changes back to the host.

Impact:

- More transparency

- Cleaner automation

- Fewer edge-case failures in management software

In other words, your systems behave the way you expect them to.

Bridging More of the Storage Ecosystem

SBC-5 also adds support for UFS data streams, extending interoperability with devices that use Universal Flash Storage—a compact, low‑power storage standard common in mobile, automotive, and embedded systems. This addition simply helps storage software interact more cleanly with UFS‑based components when they appear in broader environments.

This is subtle but important: it reflects how storage environments are becoming more heterogeneous, and standards are keeping pace.

The Bigger Picture: Quiet Innovation That Reduces Risk

SBC-5 isn’t about flashy, headline-grabbing features. It’s about something more valuable: reducing operational friction.

Across the board, the updates focus on:

- Keeping systems running during a device failure

- Improving performance efficiency

- Giving operators better control

- Making behavior more predictable

That’s why SCSI continues to matter. In large-scale environments, stability and incremental improvement often deliver more value than disruptive change.

Or put simply: SBC-5 helps storage teams spend less time reacting—and more time operating.

For more details about SBC-5, with links to the spec, see our press release here.
