Solving AI Data Challenges

StorageAI is an open standards project for efficient data services for AI workloads. We are addressing the most difficult data challenges these workloads pose, closing existing gaps in how data is processed and accessed.

- AI has exposed urgent problems in data services, especially in how storage interacts with compute.

- Data pipelines are inefficient: round-tripping between storage and compute wastes power and performance.

- Accelerators (like GPUs) go idle when data isn’t available at the right place and time, and that idle time is incredibly expensive.

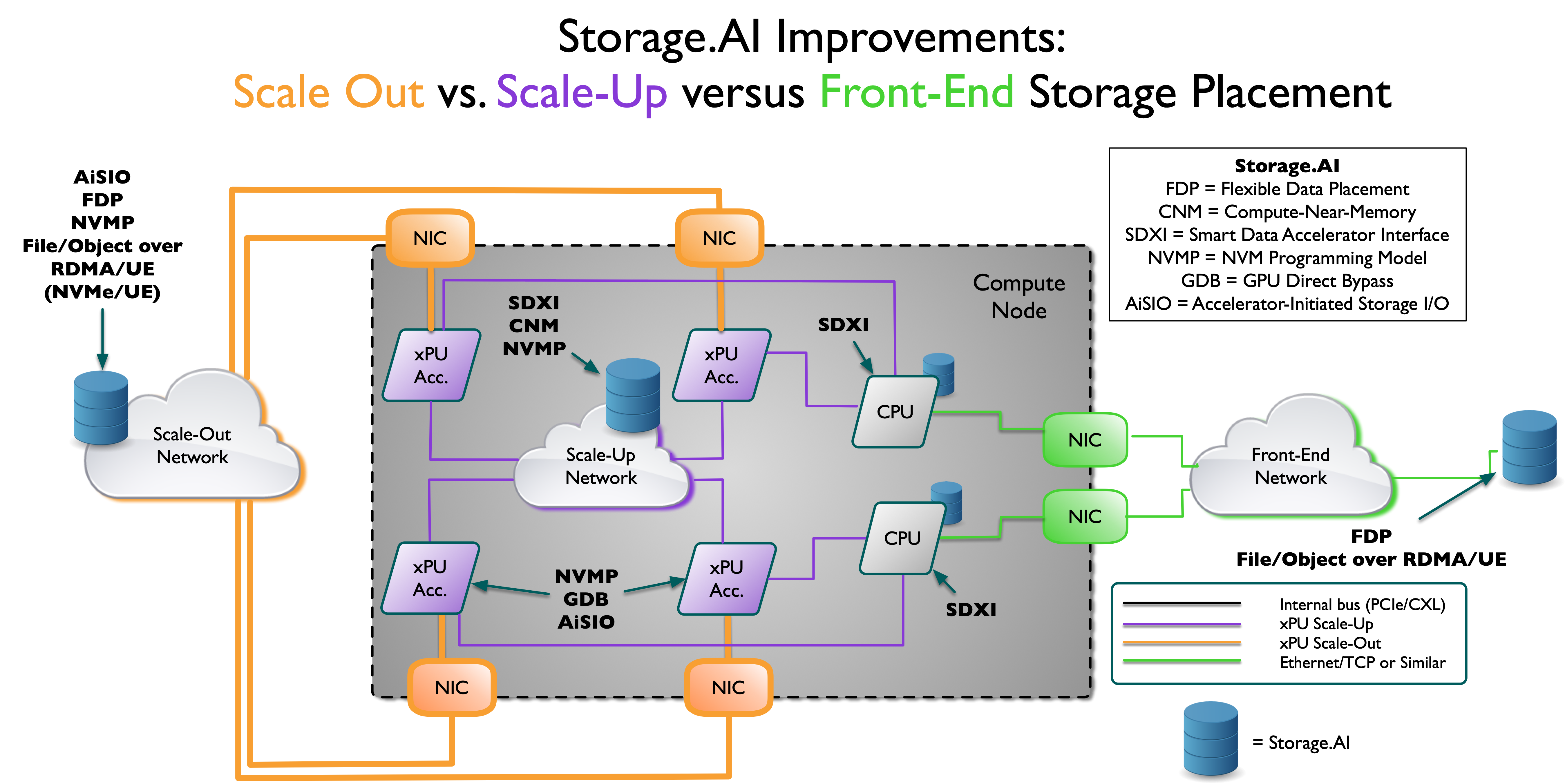

- StorageAI focuses on vendor-neutral, interoperable solutions that take advantage of GPUs and AI acceleration to address issues such as memory tiering, data movement, storage efficiency, and system-level latency.

| Ongoing SNIA Technical Work | Enables |
| --- | --- |
| SDXI (Smart Data Accelerator Interface) | Vendor-neutral DMA acceleration across CPU, GPU, and DPU; memory-to-memory copies and transforms |
| Computational Storage API & Architecture | Allows SSDs and other storage devices to perform computation (e.g. filtering, inferencing, format conversion) directly |
| NVM Programming Model | Unified software interface for accessing NVMe, SCM, and RDMA memory/storage tiers |
| Swordfish / Redfish Extensions | Unified, Redfish-compatible storage management for hybrid and disaggregated infrastructure |
| Object Drive Workgroup | Standard interfaces for object storage devices to operate in RDMA / hyperscale environments |
| Flexible Data Placement APIs | Works with computational storage and GPU-aware storage pipelines to optimize layout and streaming throughput |
| **Future Work Planned** | |
| File/Object over RDMA and Ultra Ethernet | Enables hybrid file/object storage backends, allowing traditional applications to benefit from S3-like scalability combined with the speed and efficiency of RDMA |
| Accelerator Direct Access Bypass | Bypasses the CPU for data movement between GPU memory and RDMA/NVMe, enabling near-zero-latency I/O to accelerators |
| Accelerator-Initiated I/O | GPUs can initiate and complete their own I/O operations (e.g. checkpoint writes, data loading), reducing CPU bottlenecks. Also known as Accelerator-Initiated Storage I/O (AiSIO). |
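To make the round-tripping cost concrete, here is a minimal toy model of the filtering case mentioned under computational storage. It is only a sketch: it does not use the SNIA Computational Storage API or any real device, and the names `Record`, `host_side_filter`, and `device_side_filter`, along with the record count and payload size, are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Record:
    key: int
    payload: bytes


def host_side_filter(records, predicate):
    """Conventional path: every record crosses the storage-to-host link,
    and the host then discards what it does not need."""
    bytes_moved = sum(len(r.payload) for r in records)
    kept = [r for r in records if predicate(r)]
    return kept, bytes_moved


def device_side_filter(records, predicate):
    """Modelled computational-storage path: the device applies the filter,
    so only matching records cross the link."""
    kept = [r for r in records if predicate(r)]
    bytes_moved = sum(len(r.payload) for r in kept)
    return kept, bytes_moved


# Hypothetical dataset: 1000 records of 4 KiB each; keep one in ten.
records = [Record(key=i, payload=bytes(4096)) for i in range(1000)]


def wanted(record):
    return record.key % 10 == 0


host_kept, host_bytes = host_side_filter(records, wanted)
dev_kept, dev_bytes = device_side_filter(records, wanted)

print(f"host-side filter moved   {host_bytes} bytes")
print(f"device-side filter moved {dev_bytes} bytes")
```

Both paths return the same records; the only difference in this model is how many bytes cross the link between storage and compute, which is the overhead the bullets above describe.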

"This isn’t just a new protocol. It’s a fundamental reimagining of how AI data moves."

Aarohi Minj, Evolution AI Hub

"The Storage.AI project will work to build broad ecosystem support."

Chris Mellor, Blocks & Files

"Storage.AI Project by SNIA Looks to Re-frame AI Storage Discussion."

Cliff Robinson, ServeTheHome

Join Us!

StorageAI is open to all! Join our open ecosystem with multiple working groups and organizations. Contact: [email protected]

Already a SNIA Member? Join the StorageAI Community Here